Exciting news from the world of AI and VR!

Hand tracking for immersive reality is taking a big step forward thanks to machine learning

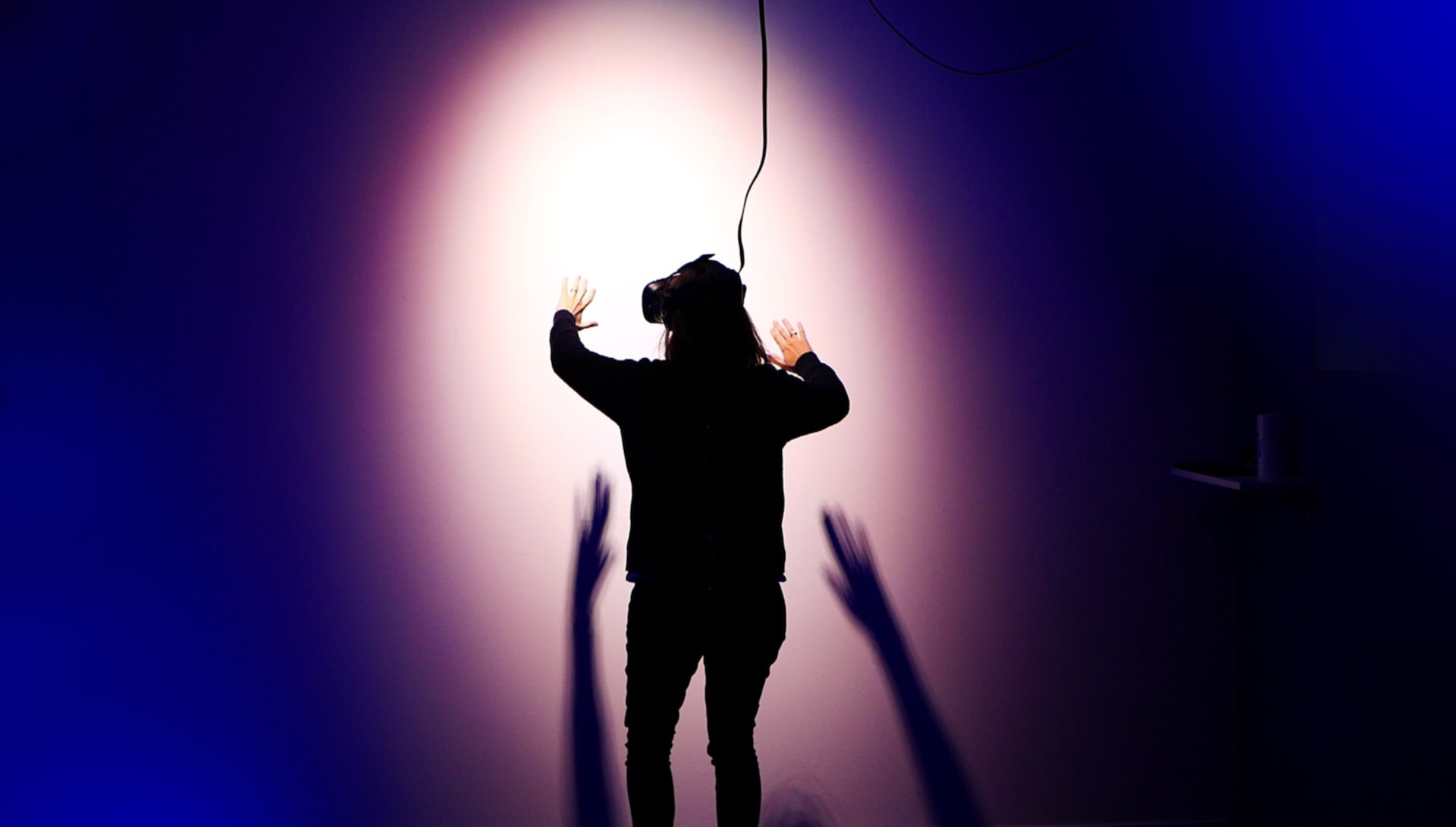

Researchers have recently developed a machine learning system that can predict hand movements in VR environments with stunning accuracy. This breakthrough has the potential to significantly enhance the VR experience and create new possibilities for training and rehabilitation applications.

Hand Tracking for Immersive Virtual Reality: where opportunities lie

Hand tracking has become an integral feature of recent generations of immersive virtual reality head-mounted displays.

With the widespread adoption of this feature, hardware engineers and software developers face an exciting array of opportunities and a number of challenges, mostly in relation to the human user. Hand tracking can add value to immersive virtual reality in several ways. Challenges certainly exist, particularly in the context of the psychology and neuroscience of the human user. However, let’s stay focussed on the positive side and explore how best practices in the field can guide the development of subsequent generations of hand tracking and virtual reality technologies.

Opportunities–Why Hand Tracking?

Our hands, with the dexterity afforded by our opposable thumbs, are one of the canonical features which separate us from non-human primates. We use our hands to gesture, feel, and interact with our environment almost every minute of our waking lives. When we are prevented from, or limited in, using our hands, we are profoundly impaired, with a range of once-mundane tasks becoming frustratingly awkward. Below, I briefly outline three significant potential benefits of having tracked hands in a virtual environment.

1–Increased Immersion and Presence

The degree to which a user can perceive a virtual environment through the sensorimotor contingencies they would encounter in the physical environment is termed “immersion”.

The subjective experience of being in a highly immersive virtual environment is known as “presence”, and recent empirical evidence suggests that being able to see one’s tracked hands animated in real time in a virtual environment is an extremely compelling method of engagement. Research has shown that we have an almost preternatural sense of our hands’ position and shape when they are obscured, and removing our hands from our visual world is a stark reminder of our disembodiment. Indeed, we spend the majority of our time during various mundane tasks foveating our hands, so removing them from the visual scene presumably has a range of consequences for our visuomotor behaviour.

2–More Effective Interaction

The next point to raise is that of interaction. A key goal of virtual reality is to allow the user to interact with the computer-generated environment in a natural fashion. In its simplest form, this interaction is achieved by the user moving their head to experience the wide visual world. More modern VR experiences, however, usually involve some form of manual interaction, from opening doors to wielding weapons. Accurate tracking of the hands potentially allows for far more precise interactions than would be possible with controllers, adding not only to the user’s immersion but also to the accuracy of their movements, which seems particularly key in the context of training.

3–More Effective Communication

The final point to discuss is that of communication, and in particular manual gesticulation: the use of one’s hands to emphasize words and punctuate sentences through a series of gestures. “Gestures” in the context of HCI has come to mean the swipes and pinching motions used to perform commands. However, the involuntary movements of the hands during natural communication appear to play a significant role not just for the listener but also for the communicator, to such an extent that conversations between two congenitally blind individuals contain as many gestures as conversations between sighted individuals.

Indeed, recent research has shown that individuals are impaired in recognizing a number of key emotions in images of bodies which have the hands removed, highlighting how important hand form information is in communicative experiences. The value of manual gestures for communication in virtual environments is compounded given that veridical real-time face tracking and visualization is technically very difficult due to the extremely high temporal and spatial resolution required to detect and track microexpressions. Furthermore, computer-generated faces are particularly prone to large uncanny-valley-like effects, whereby faces which fall just short of being realistic elicit a strong sense of unease. Significant recent strides have been made in tracking and rendering photorealistic faces, but the hardware costs are likely to be prohibitive for the current generation of consumer-based VR technologies. Tracking and rendering of the hands, with their large and expressive kinematics, should thus be a strong focus for communicative avatars in the short term.

Using deep neural networks for accurate hand-tracking

Researchers and engineers from Facebook Reality Labs and Oculus have developed what is, as of today, the only fully articulated hand-tracking system for VR that relies entirely on monochrome cameras. The system does not use active depth-sensing technology or any additional equipment (such as instrumented gloves). This technology will be deployed as a software update for Oculus Quest, the cable-free, stand-alone VR headset that is now available to consumers.

By using Quest’s four cameras in conjunction with new techniques in deep learning and model-based tracking, Facebook’s team achieved a larger interaction volume for hand-tracking than depth-based solutions do, and they did it at a fraction of the size, weight, power, and cost. Processing is done entirely on-device, and the system is optimized to support gestures for interaction, such as pointing and pinch to select.
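To make the idea of a "pinch to select" gesture concrete, here is a minimal sketch of how an application might detect a pinch once it has 3D fingertip positions. This is not the Quest implementation: the function name `is_pinching`, the 2 cm threshold, and the sample coordinates are all hypothetical, chosen only for illustration.

```python
import math

# Hypothetical threshold in metres; a real system would tune this
# per device and probably per user.
PINCH_THRESHOLD_M = 0.02


def is_pinching(thumb_tip, index_tip, threshold=PINCH_THRESHOLD_M):
    """Return True when the two fingertips are closer than `threshold`.

    Each fingertip is an (x, y, z) position in metres.
    """
    return math.dist(thumb_tip, index_tip) < threshold


# Illustrative (made-up) fingertip positions.
open_hand = is_pinching((0.00, 0.00, 0.30), (0.06, 0.02, 0.30))
pinched = is_pinching((0.00, 0.00, 0.30), (0.01, 0.005, 0.30))
print(open_hand, pinched)  # prints: False True
```

A production gesture recognizer would likely add smoothing over several frames, so that tracking noise near the threshold does not make the selection flicker.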

How it works

Deep neural networks are used to predict the location of a person’s hands as well as landmarks, such as joints of the hands. These landmarks are then used to reconstruct a 26 degree-of-freedom pose of the person’s hands and fingers. The result is a 3D model that includes the configuration and surface geometry of the hand. APIs will enable developers to use these 3D models to enable new interaction mechanics in their apps or to drive a user interface.
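As a rough illustration of how landmarks relate to a pose, the sketch below computes the bend angle at a single finger joint from three 3D landmarks. It is a simplification under stated assumptions, not the model-based tracking described above: the function name `joint_angle_deg` and the sample coordinates are hypothetical, and a full 26 degree-of-freedom pose would involve fitting a complete hand model rather than measuring joints one at a time.

```python
import math


def joint_angle_deg(parent, joint, child):
    """Angle at `joint`, in degrees, between the two bones meeting there.

    `parent`, `joint` and `child` are (x, y, z) landmark positions.
    A straight finger gives roughly 180 degrees; a bent one gives less.
    """
    u = [p - j for p, j in zip(parent, joint)]  # bone pointing back to parent
    v = [c - j for c, j in zip(child, joint)]   # bone pointing on to child
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.hypot(*u) * math.hypot(*v)
    if norm == 0.0:
        raise ValueError("landmarks must be distinct points")
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_theta))


# Illustrative (made-up) landmarks: a straight finger and a right-angle bend.
straight = joint_angle_deg((0, 0, 0), (0, 1, 0), (0, 2, 0))
bent = joint_angle_deg((0, 0, 0), (0, 1, 0), (1, 1, 0))
print(round(straight), round(bent))
```

Each such angle corresponds to one articulation of the hand; the remaining degrees of freedom would describe the position and orientation of the hand as a whole.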

Wrap up

As AI and VR continue to evolve and integrate, we can expect to see even more exciting developments in the future. It’s a thrilling time to be at the forefront of this technology, and we can’t wait to see what’s next.

Hand tracking is probably here to stay as a cardinal (but probably still optional) feature of immersive virtual reality. The opportunities for facilitating effective and engaging interpersonal communication and more formal presentations in a remote context are particularly exciting for many aspects of our social, teaching, and learning worlds. Being cognisant of the challenges which come with these opportunities is a first step toward developing a clear series of best practices to aid in the development of the next generation of VR hardware and immersive experiences.

Sources: David Buckingham on Frontiers | Facebook Reality Labs | JVDB Studios

Maker Faire Rome – The European Edition has been committed since its very first editions to making innovation accessible and usable to all, with the aim of not leaving anyone behind. Its blog is always updated and full of opportunities and inspiration for makers, startups, SMEs and all the curious ones who wish to enrich their knowledge and expand their business, in Italy and abroad.
